Inside the Computer: Hardware Composition and Program Data Processing

1️⃣ Composition of computer hardware

2️⃣ Processing of program data

1️⃣ Composition of computer hardware

- Four Major Components of a computer

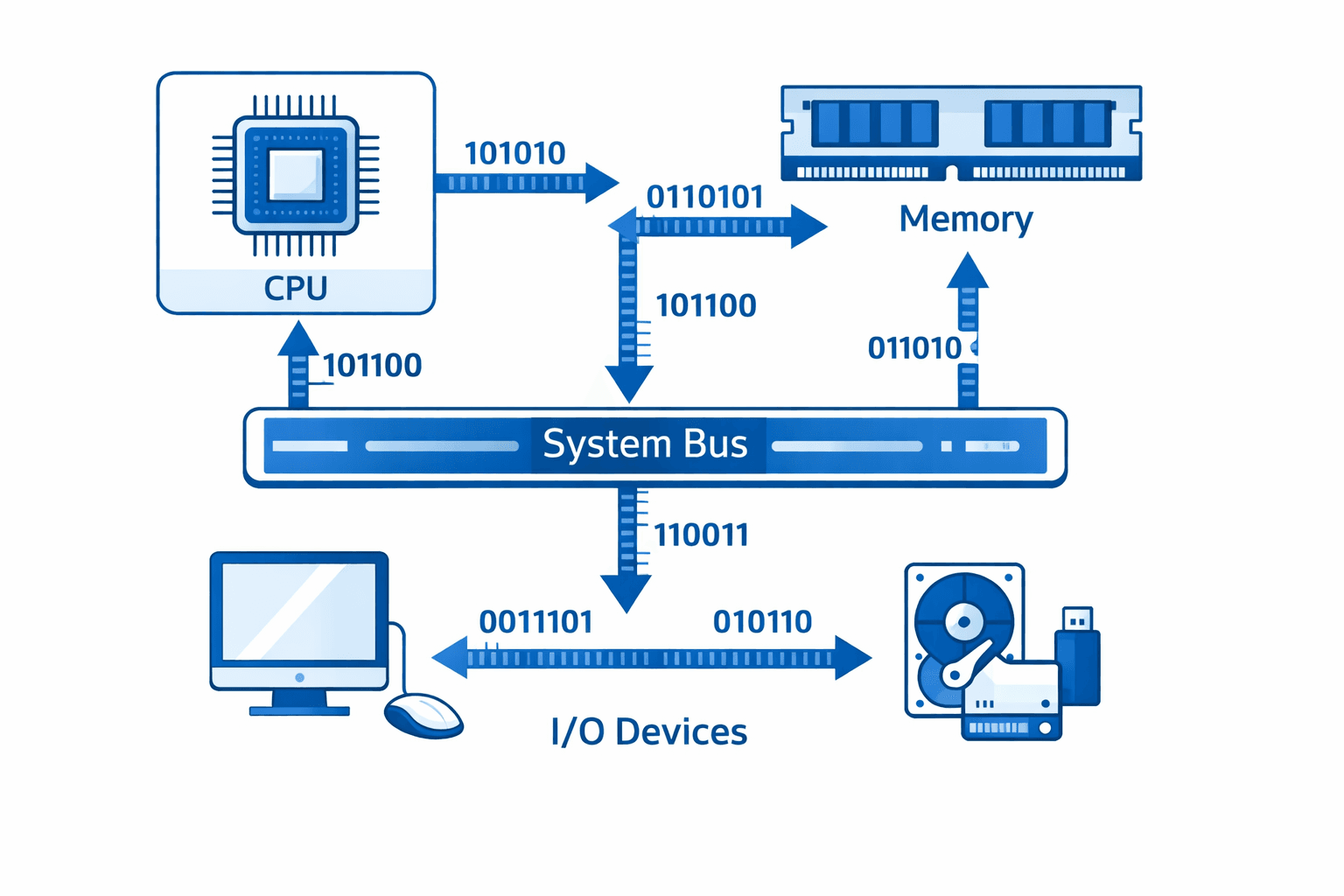

The four major components are the processor (CPU), memory, the system bus, and I/O devices. All components are connected through the system bus and operate together as a single system.

- CPU: The central unit that performs calculations and processes instructions.

- Memory: A space where programs and data needed during CPU operations are temporarily stored. The CPU can only directly process data that is loaded into memory.

- System Bus: A common pathway through which the CPU, memory, and I/O devices exchange data.

- I/O Devices: All devices used to interact with the outside world, including keyboards, mice, disks, monitors, and network devices.

Hardware Connection Structure

Here is a structural overview of how each component is actually connected.

Inside the CPU: The CPU is composed of the ALU, registers, and control unit. It not only performs calculations but also controls the order and timing of instructions.

Memory (RAM): Holds currently running programs and data. The CPU exchanges data with memory through the system bus.

I/O Devices: Not directly connected to the CPU, but indirectly connected through an I/O controller.

The most critical point is that all components are connected through the system bus, and all instruction delivery and addressing take place through this pathway. The operating system uses this structure to utilize the CPU, allocate memory, and control I/O devices.

Von Neumann Architecture

This is the most representative computer architecture, followed by the majority of computers today.

Two Most Important Characteristics

First, the CPU, memory, I/O devices, and storage devices are all connected through a single bus.

Second, programs are stored in memory just like data. Because the CPU interprets the contents of memory as instructions and executes them, a program must be loaded into memory before it can run.

Why Is This Important?

The role of the operating system, which will be covered later, is centered on memory. The operating system regulates execution order, allocates resources, and manages the system based on the programs loaded into memory.

Key Perspective

Rather than focusing on the historical background of Von Neumann, the goal is simply to understand the concept that "a computer executes programs centered around memory."

2. Processor (CPU) and Registers

- Processor (CPU): The CPU is the central unit that executes program instructions. It fetches instructions and data stored in memory, interprets them, performs the necessary operations, stores the results back in memory, and controls the overall flow of execution. In other words, if memory is the space that stores programs, the CPU is the entity that actually executes them.

Components of the Processor: The CPU is composed of three elements: registers, the ALU (Arithmetic Logic Unit), and the control unit. Each plays a different role in processing a single instruction.

Registers are extremely fast storage locations inside the CPU that temporarily hold data and instructions retrieved from memory, as well as intermediate results of operations. Because the CPU can use these values without waiting for memory, registers are a key reason the CPU can work so quickly.

The ALU (Arithmetic Logic Unit) is the part responsible for actual calculations, performing arithmetic operations such as addition and subtraction, as well as logical operations such as comparisons.

The Control Unit decides which instruction to execute and directs the registers and ALU on when and how to operate through control signals. It manages the overall flow through the internal bus.

In summary, registers handle storage, the ALU handles computation, and the control unit handles control; these three elements work together to execute a single instruction.

Registers are the storage space the CPU uses at the moment it executes an instruction. Values needed for execution, data required for operations, and intermediate results produced during calculations are all temporarily stored here. Because it would take too long for the CPU to travel back and forth to memory every time it works, information that is immediately needed is loaded into registers for processing.

Registers are therefore most directly connected to the flow of program execution and are the first storage space the CPU accesses. Since they exist inside the CPU, their number and capacity are very limited, but in return they are extremely fast.

Components of Registers

There are multiple registers inside the CPU, and each register plays a different role during the instruction execution process.

- PC (Program Counter): Stores the address of the next instruction to be executed. The CPU uses this value to determine where to fetch the next instruction.

- IR (Instruction Register): Stores the currently executing instruction fetched from memory.

- MAR (Memory Address Register): Stores the memory address to be accessed.

- MBR (Memory Buffer Register): Temporarily stores the instruction or data value read from memory.

- ACC / DR: Stores data used in operations and the results of those operations.

Actual arithmetic and logical operations are performed by the ALU. These registers and memory are connected through the system bus, and the CPU executes programs by exchanging instructions and data between registers and memory. Ultimately, this structure is the core of the entire execution flow, in which the CPU fetches an instruction, interprets it, computes, and stores the result.
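As a rough illustration of how these registers cooperate while an instruction is fetched, the sketch below models memory as a Python list and the registers as entries in a dictionary. The instruction strings, the memory layout, and the `fetch` helper are hypothetical and chosen only to make the register roles visible; real hardware does this with electrical signals, not function calls.

```python
# Toy model: memory is a list of words, registers live in a dict.
# The instruction strings and fetch() are illustrative assumptions.
memory = ["LOAD 4", "ADD 5", "STORE 6", "HALT", 10, 32, 0]

registers = {"PC": 0, "MAR": None, "MBR": None, "IR": None, "ACC": 0}


def fetch(regs, mem):
    """Move one instruction from memory into IR by way of MAR and MBR."""
    regs["MAR"] = regs["PC"]        # address to be accessed
    regs["MBR"] = mem[regs["MAR"]]  # word read from memory
    regs["IR"] = regs["MBR"]        # instruction now being executed
    regs["PC"] += 1                 # PC now points at the next instruction
    return regs["IR"]


first = fetch(registers, memory)
print(first, registers["PC"])  # LOAD 4 1
```

After one call, the IR holds the first instruction and the PC has already advanced, which is why the PC is described as holding the address of the next instruction.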

3. Cache and Memory

Cache is a high-speed memory located between the CPU and main memory. While the CPU has a very fast processing speed, main memory is relatively slow to access. This speed difference often causes the CPU to stall while waiting for memory to respond, and cache memory is used to alleviate this problem.

Cache is a space that pre-stores instructions and data frequently used by the CPU, allowing it to retrieve needed data directly from the nearby cache rather than going all the way to main memory. In summary, cache is a type of memory, but it should be understood as a performance-oriented structural element designed to help the CPU operate faster.

Memory is a device that stores currently running programs and data. When a program is executed, the program and the data it needs must be loaded into memory, and the CPU reads from this memory to carry out instructions. In other words, memory is the workspace where the CPU performs its operations while the computer is running. When designing a computer system, memory and storage devices are typically selected based on three criteria.

The first is speed: how quickly data can be read and written.

The second is cost: how much it costs per unit of capacity.

The third is volatility: whether data is retained when the power is turned off.

Since no single storage device can satisfy all three criteria simultaneously, computer systems combine devices with different characteristics in a hierarchical structure.

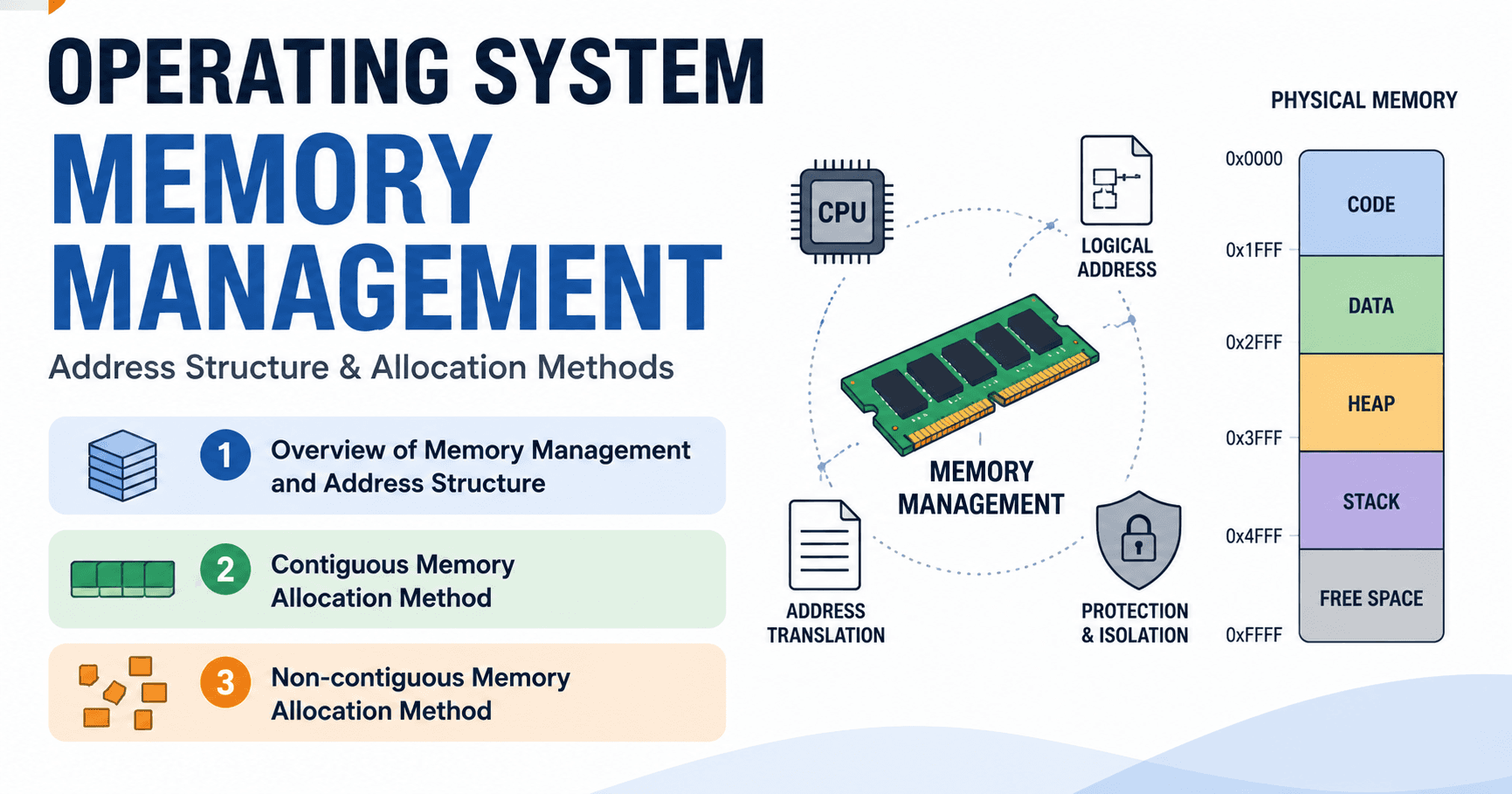

Main memory is the representative storage device that the CPU can directly access, and along with registers, it is the memory most closely connected to the CPU. When executing a program, the program's instructions and data must be located in main memory. Anything not in memory cannot be accessed by the CPU and therefore cannot be executed.

Main memory also serves to store intermediate results and temporary data generated during program execution. Main memory is generally implemented using DRAM technology, making it a volatile memory that can only retain data while power is supplied. Therefore, when the power is turned off, all contents of main memory are lost. As a result, main memory is a core storage space for program execution, but it is not a storage device suited for long-term data retention.

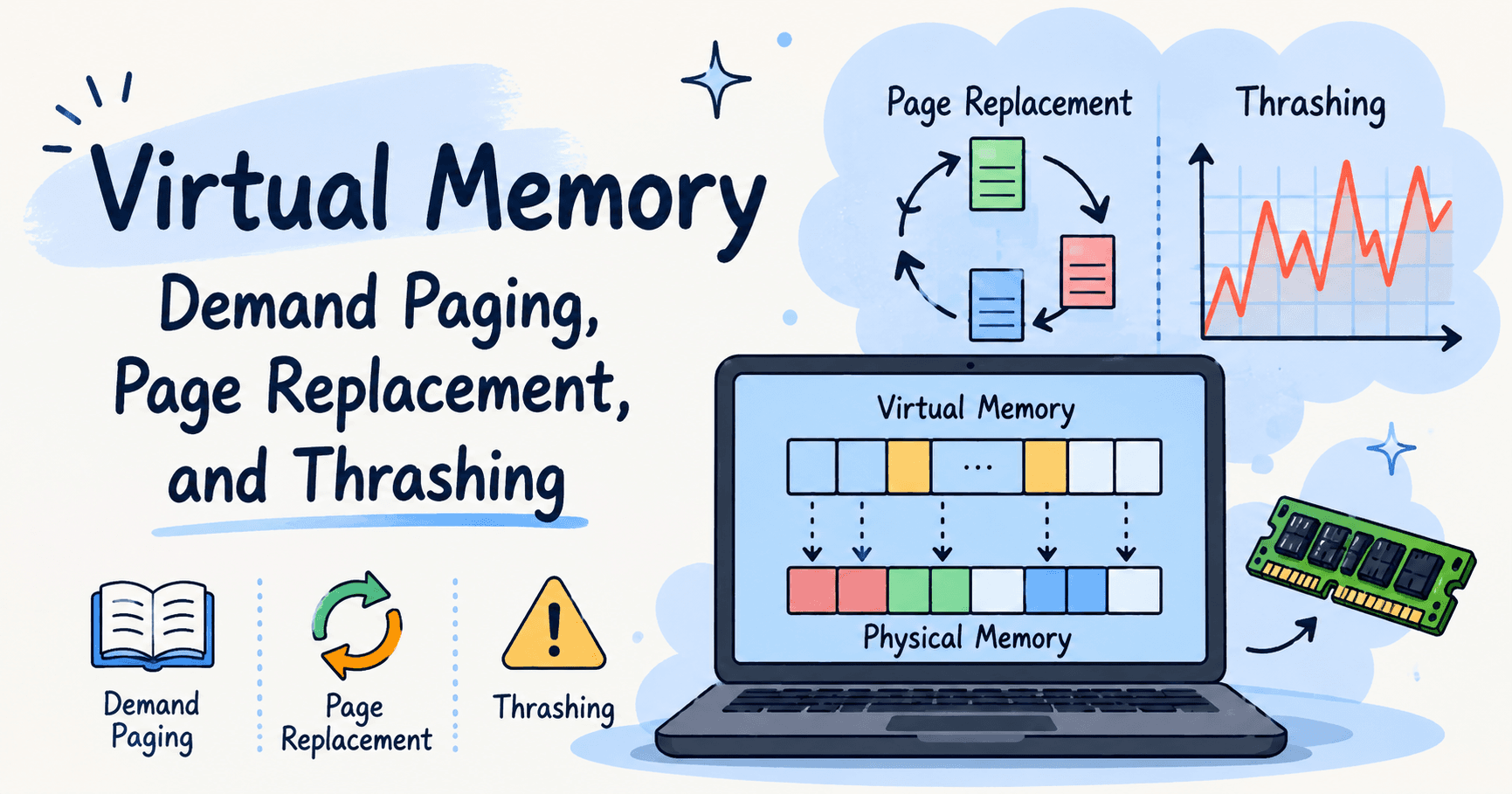

Memory Hierarchy

Computer memory is not a single layer but a structure divided into multiple layers. The higher up in the hierarchy, the closer to the CPU, meaning faster speed but smaller capacity. The lower down, the slower the speed, but the larger the capacity and the lower the cost.

The CPU can directly access data in registers, cache, and main memory, but cannot directly access secondary storage. Therefore, programs stored in secondary storage must first be transferred to main memory before they can be executed. The reason for using this hierarchical structure is simple: because it is not possible to make all data storage both fast and cheap, the hierarchy strikes a balance between speed and cost in order to maximize CPU performance.

System Bus

The hardware components inside a computer do not operate independently; they work together by exchanging data with one another. The system bus is the pathway that connects the CPU, main memory, and I/O devices into a single system. Rather than connecting each device directly to one another, the system bus is structured so that all devices exchange data and control signals through a common pathway. Whether the CPU is fetching instructions from memory, receiving input data from an I/O device, or storing processed results, all data movement takes place through the system bus. In short, the system bus is the connection structure that ties all hardware inside the computer together into one unified system.

System Bus Signal Types

Although the system bus appears to be a single pathway, it is actually divided into three buses according to the type of information being transmitted: the address bus, the data bus, and the control bus. The address bus carries the location the CPU wants to access; it specifies which location in memory or which device is to be used. The data bus is the pathway through which actual instructions and data travel. The content being processed moves between the CPU, memory, and I/O devices through this bus. The control bus carries signals that control the manner and sequence of operations, such as read operations, write operations, I/O requests, and whether a task has started or completed.

In summary, the address bus handles where, the data bus handles what, and the control bus handles how; these three buses work together to ensure proper communication between the CPU, memory, and I/O devices.
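The division into where, what, and how can be sketched as a single bus transaction with three fields. This is only a conceptual model: the `BusTransaction` class, the `"READ"`/`"WRITE"` signal names, and the `memory_respond` helper are assumptions made for illustration and do not follow any real bus specification.

```python
# Hypothetical model of one bus transaction; field and signal names are
# illustrative, not taken from a real bus standard.
from dataclasses import dataclass
from typing import Optional


@dataclass
class BusTransaction:
    address: int                 # address bus: where
    control: str                 # control bus: how ("READ" or "WRITE")
    data: Optional[int] = None   # data bus: what


def memory_respond(ram, txn):
    """Main memory reacting to a transaction placed on the bus."""
    if txn.control == "READ":
        txn.data = ram[txn.address]   # memory puts the value on the data bus
    elif txn.control == "WRITE":
        ram[txn.address] = txn.data   # memory stores the value from the data bus
    else:
        raise ValueError(f"unsupported control signal: {txn.control}")
    return txn


ram = [0] * 8
memory_respond(ram, BusTransaction(address=3, control="WRITE", data=99))
read_back = memory_respond(ram, BusTransaction(address=3, control="READ"))
print(read_back.data)  # 99
```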

I/O Devices

The CPU cannot communicate with users through main memory alone; this is where I/O devices come in. I/O devices are hardware that connects the computer with the user or the external environment. They serve roles such as receiving input via a keyboard, displaying results on a monitor, and storing or transmitting data through disks or networks. These I/O devices do not connect directly to the CPU or main memory, but instead exchange data through the system bus.

They are broadly divided into three categories based on their role.

Input Devices: Keyboard, Mouse, Camera

Output Devices: Monitor, Printer, Speaker

Storage / Communication: Disk (SSD/HDD), Network Card

- In summary, I/O devices are the components that allow information processed inside the computer to connect with the outside world.

I/O Devices and the Operating System

I/O devices are extremely diverse and complex in terms of hardware. Their operating methods, speeds, and control mechanisms are all different. If application programs had to directly control every device individually, they would become enormously complex. This is why the operating system takes on this role. Because the operating system manages I/O devices with different characteristics in a consistent manner, application programs do not need to worry about the specific operating methods of each device; they simply work using common operations such as read, write, and output. The actual device control is carried out by the operating system on their behalf. Ultimately, although I/O devices are diverse and complex at the hardware level, the operating system hides that complexity, allowing application programs to operate in the same way regardless of the type of device.
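The idea of hiding device differences behind common operations can be sketched with a small class hierarchy. The `Device`, `Keyboard`, and `Disk` classes and the `copy` function below are hypothetical names invented for this example; they are not a real operating system API, only a picture of application code that relies on read and write alone.

```python
# Sketch of a uniform device interface; all names are hypothetical.
from abc import ABC, abstractmethod


class Device(ABC):
    @abstractmethod
    def read(self) -> bytes: ...

    @abstractmethod
    def write(self, data: bytes) -> int: ...


class Keyboard(Device):
    def __init__(self, typed: bytes):
        self._buffer = typed

    def read(self) -> bytes:
        data, self._buffer = self._buffer, b""   # hand over pending input
        return data

    def write(self, data: bytes) -> int:
        raise OSError("keyboard is an input-only device")


class Disk(Device):
    def __init__(self):
        self._blocks = bytearray()

    def read(self) -> bytes:
        return bytes(self._blocks)

    def write(self, data: bytes) -> int:
        self._blocks.extend(data)
        return len(data)


def copy(src: Device, dst: Device) -> int:
    """Application code: uses only read/write, unaware of device details."""
    return dst.write(src.read())


disk = Disk()
written = copy(Keyboard(b"hello"), disk)
print(written, disk.read())  # 5 b'hello'
```

The `copy` function never checks which kind of device it was given, which is the property the operating system provides to application programs.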

2️⃣ Processing of program and data

1) Instructions and Data

- Information handled by a computer is broadly divided into data and instructions. Data refers to values that enter through input devices or are processed during program execution, while instructions are directives telling the CPU what tasks to actually perform, such as "add these values," "store this," or "move to the next step." An important point here is that a program is not one large task, but rather a collection of many individual instructions. These instructions are stored at specific memory addresses, and the CPU fetches and executes them one by one in order, using the necessary data alongside them to carry out the entire program.

- CPU Instruction-Level Operation: The CPU processes one instruction at a time, and each instruction goes through a defined set of steps. First, if the required data is in main memory, it is brought into a register through the bus; this is the preparation phase for performing an operation. Next, the value stored in the register is passed to the ALU, where the actual operation, such as ADD, is performed. Once the operation is complete, the result is either stored back in a register or, if necessary, moved to memory. The control unit manages the sequence of this entire flow, and the CPU repeats this process for every instruction.

In summary, the CPU executes a program by continuously repeating the basic cycle of fetching data, computing, and moving the result.

Instruction Execution Cycle

The following is the process the CPU goes through when executing a single instruction. Regardless of which instruction is being executed, the CPU always repeats the same defined sequence.

1. Instruction Fetch: Based on the address held in the Program Counter (PC), the next instruction to be executed is retrieved from memory and stored in the Instruction Register (IR).

2. Instruction Decode / PC Update: The CPU interprets what the fetched instruction means, and updates the PC to point to the next instruction. This is the step that determines what to do and where to go next.

3. Operand Fetch: If the instruction requires data, the relevant values are retrieved from memory or registers.

4. Instruction Execute: The actual operation, such as an arithmetic, logical, or comparison operation, is carried out by hardware including the ALU.

5. Result Store: Once the operation is complete, the result is stored in a register or written to memory if necessary.

6. Move to Next Instruction: The CPU returns to step 1 for the next instruction, and this cycle repeats continuously.
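The cycle above can be sketched as a loop over a toy accumulator machine. The instruction set used here (`LOAD`, `ADD`, `STORE`, `HALT`), the text encoding of instructions, and the memory layout are all assumptions made for this example and do not describe any real CPU.

```python
# Toy accumulator machine; the instruction set and memory layout are
# hypothetical and exist only to show the repeating cycle.
def run(memory):
    pc, acc = 0, 0
    while True:
        ir = memory[pc]                  # 1. fetch the instruction at PC
        op, _, arg = ir.partition(" ")   # 2. decode ...
        pc += 1                          #    ... and update the PC
        if op == "HALT":
            return memory
        addr = int(arg)
        if op == "LOAD":
            acc = memory[addr]           # 3. operand fetch into the accumulator
        elif op == "ADD":
            acc = acc + memory[addr]     # 3-4. operand fetch, then execute
        elif op == "STORE":
            memory[addr] = acc           # 5. store the result
        else:
            raise ValueError(f"unknown instruction: {ir}")
        # 6. loop back to the fetch step for the next instruction


# Instructions occupy addresses 0-3, data occupies addresses 4-6.
program = ["LOAD 4", "ADD 5", "STORE 6", "HALT", 10, 32, 0]
result = run(program)
print(result[6])  # 42
```

Instructions and data sit in the same list, which mirrors the stored-program idea: the CPU treats a memory word as an instruction only because the PC points at it.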

CPU-Memory Speed Gap

One of the most significant problems in computer performance is the speed gap between the CPU and memory. The CPU is extremely fast at interpreting and executing instructions, while main memory is relatively slow to access.

This means the CPU is often ready to execute the next instruction, but the required data has not yet been loaded from memory, leaving the CPU with nothing to do but wait for a memory response.

If this waiting time repeats, overall system performance will suffer no matter how powerful the CPU is. Over time, CPU performance has risen steeply while memory performance has grown only gradually, causing the gap to widen.

- This gap is known as the processor-memory performance gap, and it means that even if CPU speed continues to increase, the overall system performance will be bottlenecked at the point where memory access cannot keep up.

Cache Memory

Cache memory is used to alleviate the speed gap between the CPU and main memory. It is a fast storage space located between the CPU and main memory that temporarily stores instructions and data frequently used by the CPU.

As a result, the CPU can retrieve needed data directly from the cache without accessing main memory every time. Cache is not a replacement for main memory; it is a supplementary storage space designed to assist main memory access and reduce CPU waiting time.

How Cache Memory Works

When the CPU needs an instruction or data, it checks the cache first rather than going directly to main memory. If the required data is already in the cache, this is called a cache hit; the CPU can retrieve the data immediately without accessing main memory, enabling very fast processing.

On the other hand, if the data is not in the cache, this is called a cache miss; in this case, the CPU must go all the way to main memory, which takes more time. The retrieved data is then stored in the cache to prepare for future accesses.

In summary, a cache hit is fast and reduces memory access, while a cache miss is slow and requires memory access. The effectiveness of cache depends on how frequently cache hits occur.
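A minimal sketch of the hit and miss behavior follows. The text does not say how a full cache chooses what to discard, so the least-recently-used eviction below is an assumption, as are the capacity and the latency figures (1 ns for a hit, 100 ns extra for a miss) used to compute an average access time.

```python
# Minimal cache model. Capacity, latencies, and the LRU eviction policy are
# assumptions for illustration; the text does not specify them.
from collections import OrderedDict


class Cache:
    def __init__(self, capacity, main_memory):
        self.capacity = capacity
        self.main_memory = main_memory
        self.lines = OrderedDict()   # address -> value, oldest first
        self.hits = 0
        self.misses = 0

    def read(self, address):
        if address in self.lines:            # cache hit
            self.hits += 1
            self.lines.move_to_end(address)  # mark as most recently used
            return self.lines[address]
        self.misses += 1                     # cache miss: go to main memory
        value = self.main_memory[address]
        self.lines[address] = value          # keep it for future accesses
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)   # evict the least recently used
        return value


def average_access_time(hits, misses, hit_time, miss_penalty):
    """Every access pays hit_time; misses additionally pay miss_penalty."""
    total = hits + misses
    return (hits * hit_time + misses * (hit_time + miss_penalty)) / total


cache = Cache(capacity=2, main_memory=list(range(100, 110)))
for address in [0, 1, 0, 1, 0, 1, 2, 0]:
    cache.read(address)

average = average_access_time(cache.hits, cache.misses, hit_time=1, miss_penalty=100)
print(cache.hits, cache.misses, average)  # 4 4 51.0
```

With half of the accesses missing, the average is dominated by the miss penalty, which is why the hit rate matters so much.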

Why Cache Works Effectively: Locality

Cache is effective because of a property called locality: the tendency for memory accesses during program execution to be concentrated and repeated within a specific range, rather than occurring randomly. Locality is divided into two types.

Temporal locality refers to the characteristic that data or instructions used recently are likely to be used again in the near future. Variables inside loops or repeatedly executed instructions are typical examples.

Spatial locality refers to the characteristic that if a particular memory location is accessed, nearby instructions or data are also likely to be used soon. Processing an array in order or executing a sequence of consecutive instructions are representative examples; in such cases, loading not just the needed data but also nearby data into the cache at once increases the probability of cache hits on future accesses.

Cache is therefore not simply a fast memory; it is a structure designed to exploit these locality characteristics to reduce memory access and improve performance.
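The effect of locality can be seen by counting misses for different access patterns. The sketch below assumes a cache that loads a whole block of neighboring addresses on each miss and, to keep the example short, never runs out of space; the block size of 4 and the access patterns are made-up values.

```python
# Counts misses for a cache that loads a whole block on each miss.
# Block size and unlimited capacity are simplifying assumptions.
def count_misses(addresses, block_size=4):
    cached_blocks = set()
    misses = 0
    for address in addresses:
        block = address // block_size   # neighboring addresses share a block
        if block not in cached_blocks:
            misses += 1
            cached_blocks.add(block)    # the miss brings in nearby data too
    return misses


sequential = list(range(16))            # array walked in order (spatial locality)
strided = [i * 4 for i in range(16)]    # every access lands in a new block
repeated = [0, 1, 2, 3] * 4             # loop reusing the same data (temporal locality)

print(count_misses(sequential))  # 4
print(count_misses(strided))     # 16
print(count_misses(repeated))    # 1
```

All three patterns make 16 accesses, but the ones with locality miss far less often than the strided one.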

2) Bottleneck

When a program runs, the CPU does not just perform calculations; it uses various resources such as memory access, cache usage, and I/O processing in sequence. If even one of these resources is slow, the entire flow will be delayed at that point. The slowest processing stage that limits overall performance in this way is called a bottleneck. For example, even if the CPU itself is very fast, if memory access is slow, the CPU must wait for the response; and as a result, the overall program speed is determined by memory performance.

To address this, systems use cache to alleviate memory access delays and improve the I/O processing methods themselves to reduce I/O-related bottlenecks.
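As a simple way to picture a bottleneck, the sketch below treats execution as a chain of stages and reports the slowest one. The stage names and throughput figures are invented numbers, and modeling overall speed as the minimum stage throughput is a simplification that assumes every item passes through every stage.

```python
# Stage names and throughput figures are made-up numbers for illustration.
def find_bottleneck(stage_throughput):
    """Return the slowest stage and the throughput it imposes on the whole flow."""
    name = min(stage_throughput, key=stage_throughput.get)
    return name, stage_throughput[name]


stages = {"cpu": 1000, "cache": 800, "memory": 100, "disk_io": 5}  # items per ms
slowest, limit = find_bottleneck(stages)
print(slowest, limit)  # disk_io 5
```

Making the CPU ten times faster would not change the result here; only improving the slowest stage raises the limit.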

Common Flow of I/O Device Processing

I/O devices are physically much slower than the CPU. Therefore, if the CPU simply waits whenever an I/O operation occurs, efficiency drops significantly. For this reason, how much the CPU should be involved in I/O processing becomes an important design consideration for the operating system.

In some cases the CPU directly checks the device status, while in others the device sends a completion signal after finishing its task. Depending on the I/O processing method, the CPU can either be tied up waiting or freed to perform other work. The operating system uses various I/O processing methods to maximize system performance.

- Polling: Polling is a method in which the CPU, after making a request, repeatedly checks the status of the I/O device itself. The CPU continuously asks the device whether it is ready. The advantage of this method is that it is very simple to implement; it only requires checking device status without complex control logic. However, because the CPU repeatedly checks status without doing any other work even when the device is not yet ready, CPU resources are wasted and overall system efficiency drops.
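A polling loop can be sketched against a simulated device. The `SlowDevice` class, its tick-based notion of time, and the method names are hypothetical stand-ins for a real device status register.

```python
# Simulated device; the class, its tick-based timing, and the method names
# are hypothetical stand-ins for real device status registers.
class SlowDevice:
    def __init__(self, ticks_needed):
        self.ticks_left = ticks_needed

    def tick(self):
        self.ticks_left = max(0, self.ticks_left - 1)

    def is_ready(self):
        return self.ticks_left == 0


def poll_until_ready(device):
    """The CPU repeatedly checks status and does no other work meanwhile."""
    wasted_checks = 0
    while not device.is_ready():
        wasted_checks += 1   # a CPU cycle spent only on asking
        device.tick()        # simulated time passing on the device
    return wasted_checks


checks = poll_until_ready(SlowDevice(ticks_needed=5))
print(checks)  # 5
```

Every iteration of the loop is CPU time that produced nothing except the answer "not yet".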

- Interrupt: With the interrupt method, the CPU does not check device status directly. Instead, after making an I/O request, it hands the task off to the device and continues performing other work. When the device finishes its task, it sends a signal to the CPU; this signal is called an interrupt. The CPU temporarily pauses its current work, handles the device's completion request, and then returns to its original task. In other words, rather than the CPU continuously checking device status, the device calls the CPU only when needed. Because the CPU can perform other work during I/O wait time, this method uses CPU resources far more efficiently than polling.

The flow of the interrupt method is as follows. First, the CPU sends an I/O request to the device and continues other work without waiting. The device processes the requested data and, upon completion, sends an interrupt to the CPU. When an interrupt occurs, the CPU stops its current work and passes control to the kernel. Multiple devices can generate interrupts simultaneously, but the CPU can only handle one interrupt at a time, so the operating system processes simultaneous interrupts one by one in order of priority. Once all handling is complete, the CPU returns to its original task. The key point is that the CPU does not continuously check the device, so no CPU time is wasted on status checks, and the device calls the CPU only when necessary.
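The interrupt flow, including priority ordering when several devices finish at once, can be sketched with a priority queue. The device names, the priority numbers (lower means more urgent), and the tick-based timing are assumptions for this example; real interrupt controllers work in hardware.

```python
# Sketch of interrupt-driven I/O with priority ordering. Device names,
# priority numbers, and tick-based timing are illustrative assumptions.
import heapq


def run_with_interrupts(requests, total_ticks):
    """requests: (finish_tick, priority, device); lower priority value is served first."""
    pending = []   # interrupts raised but not yet handled
    log = []
    for tick in range(total_ticks):
        for finish, priority, device in requests:
            if finish == tick:
                heapq.heappush(pending, (priority, device))  # device raises an interrupt
        if pending:
            _, device = heapq.heappop(pending)   # handle one interrupt at a time
            log.append(f"tick {tick}: handle {device}")
        else:
            log.append(f"tick {tick}: other work")   # CPU is free for other tasks
    return log


log = run_with_interrupts(
    [(2, 1, "disk"), (2, 0, "timer"), (4, 2, "keyboard")], total_ticks=6
)
for line in log:
    print(line)
```

In this run the disk and timer finish on the same tick; the timer is handled first because of its priority, the disk on the following tick, and the CPU does other work whenever nothing is pending.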

- DMA (Direct Memory Access): With DMA, the CPU only sets the conditions for the transfer, such as where data should come from, where it should go, and how much should be transferred. The actual data transfer is then handed off to the DMA controller. Once this setup is complete, the CPU no longer participates directly in the data transfer process and moves on to other work. The actual data movement occurs directly between the I/O device and memory without passing through the CPU. When the transfer is complete, the DMA controller generates an interrupt to notify the CPU that the transfer has finished.

In this way, the CPU only configures the transfer once at the beginning, and all subsequent repetitive data movement is handled entirely by the DMA controller. DMA is therefore the most CPU-efficient of the three methods, consuming almost no CPU resources even for large-volume data transfers.
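The division of labor in DMA can be sketched as follows. The `DMAController` class, its method names, and the use of a callback to stand in for the completion interrupt are all assumptions made to keep the example small; a real controller is programmed through hardware registers.

```python
# Hypothetical DMA controller model; names and the callback-style completion
# interrupt are assumptions made to keep the sketch small.
class DMAController:
    def __init__(self, memory):
        self.memory = memory
        self.job = None

    def configure(self, source, dest_addr, count, on_complete):
        """The CPU's only involvement: where from, where to, how much."""
        self.job = (source, dest_addr, count, on_complete)

    def run_transfer(self):
        """Moves data from the device to memory without the CPU handling each word."""
        source, dest_addr, count, on_complete = self.job
        self.memory[dest_addr:dest_addr + count] = source[:count]
        self.job = None
        on_complete(count)   # completion interrupt to the CPU


memory = [0] * 8
device_buffer = [7, 8, 9, 10]
events = []

dma = DMAController(memory)
dma.configure(
    device_buffer, dest_addr=2, count=3,
    on_complete=lambda n: events.append(f"done: {n} words"),
)
events.append("cpu: other work")   # the CPU is free while the transfer runs
dma.run_transfer()

print(memory)  # [0, 0, 7, 8, 9, 0, 0, 0]
print(events)  # ['cpu: other work', 'done: 3 words']
```

The CPU appears only in `configure` and in the completion callback, matching the description that it sets up the transfer once and is notified at the end.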

Summary of I/O Methods

I/O processing methods have evolved based on how much CPU involvement is required.