Introduction to Operating Systems: Concepts, Development, and Practice

1️⃣ Concept and role of the operating system

2️⃣ Development and characteristics of operating systems

3️⃣ Setting up a practice environment

1️⃣ Concept and role of the operating system

1. Definition of an Operating System

The core software that coordinates all operations between the user and hardware

5 Key Roles

Intermediary between the user and hardware

Controls and manages the execution of applications

Allocates and manages computer resources

Provides input/output control and data management services

Provides an abstracted execution environment that hides the details of hardware

2. Computer System Structure

User (Human, device, other computer)

↕

Software (Operating System) ← Core

↕

Hardware (CPU, memory, storage, I/O device)

Requests cannot skip the middle layer: users and applications reach the hardware only through the operating system.
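The layering is visible from inside any program. The minimal Python sketch below (Python chosen only for readability) never drives the screen or terminal itself; it hands bytes to the OS through `os.write`, a thin wrapper over the POSIX `write()` system call. The helper name `ask_os_to_print` is hypothetical.

```python
import os


def ask_os_to_print(message: str) -> int:
    """Hand bytes to the OS instead of touching the output hardware directly."""
    data = message.encode("utf-8")
    # File descriptor 1 is standard output. os.write() performs the write()
    # system call, so control passes from the program into the kernel here;
    # the kernel decides whether the bytes go to a terminal, a file, or a pipe.
    return os.write(1, data)  # the kernel reports how many bytes it accepted


if __name__ == "__main__":
    count = ask_os_to_print("hello from user space\n")
    print(f"the OS reported {count} bytes written")
```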

3. Main Roles of the OS (3 Roles)

| Role | Description |
|---|---|
| Coordinator | Manages execution order of multiple programs and provides a concurrent execution environment |
| Resource Allocator | Distributes CPU, memory, storage, etc. efficiently, fairly, and safely |
| Control Program | Blocks improper resource usage and prevents errors from spreading throughout the system |

4. Goals of OS Development (3 Goals)

Convenience: Hides complex hardware and provides intuitive interfaces (mouse, touch) → accessible to everyone

Efficiency: Manages resources in a balanced, waste-free manner → higher throughput, faster response, stable operation

Improved Control Services: Stably controls I/O devices and system state in multi-user and multi-program environments

5. Main Functions of the OS (7 Functions)

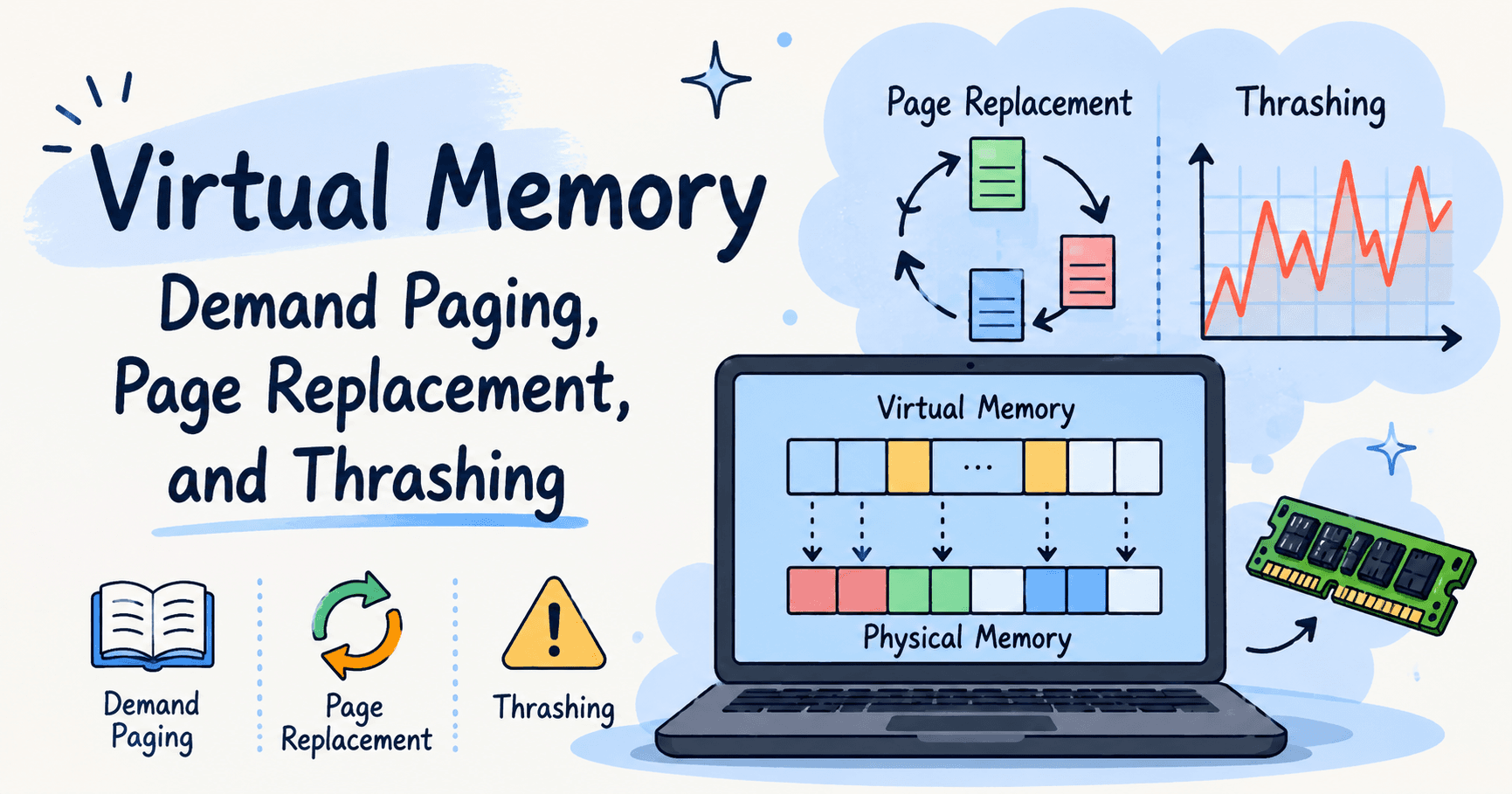

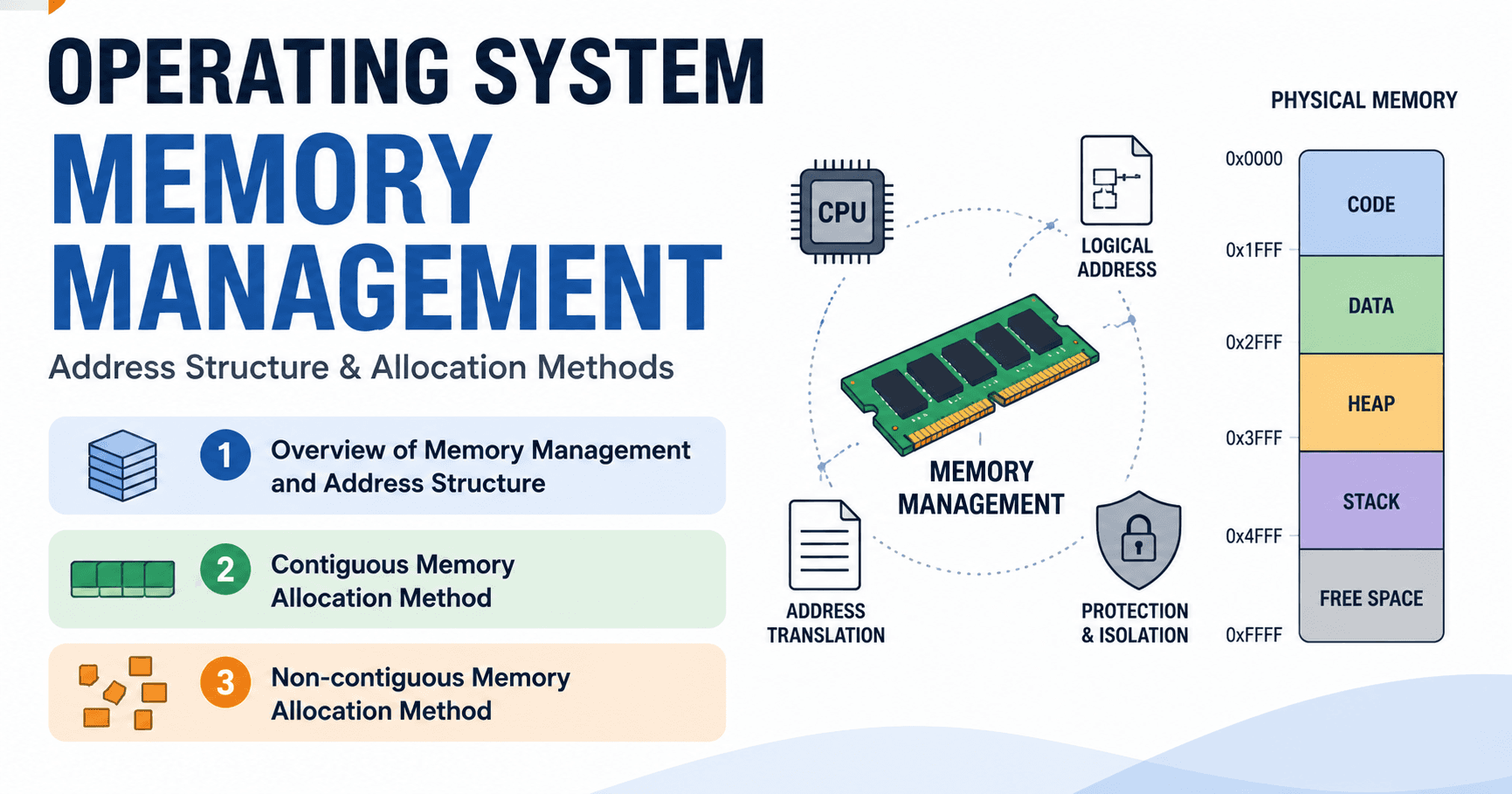

① Memory Management

Manages RAM allocation and reclamation when programs are executed

Main memory (RAM) is volatile → all data in it is lost when the power is turned off
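Allocation and reclamation can be illustrated with a toy model. This sketch assumes a simple first-fit policy over one fixed-size block of memory; real operating systems use paging and far more elaborate allocators, and the class and method names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


class FirstFitMemory:
    """Toy RAM model: free space is a sorted list of (start, size) holes."""

    def __init__(self, size: int) -> None:
        self.free: List[Tuple[int, int]] = [(0, size)]
        self.allocated: Dict[str, Tuple[int, int]] = {}  # owner -> (start, size)

    def allocate(self, owner: str, size: int) -> Optional[int]:
        """Give `owner` the first hole that is large enough; None if nothing fits."""
        for i, (start, hole) in enumerate(self.free):
            if hole >= size:
                self.allocated[owner] = (start, size)
                if hole == size:
                    self.free.pop(i)  # hole used up exactly
                else:
                    self.free[i] = (start + size, hole - size)  # shrink the hole
                return start
        return None

    def release(self, owner: str) -> None:
        """Reclaim `owner`'s block and merge it with any adjacent free holes."""
        self.free.append(self.allocated.pop(owner))
        self.free.sort()
        merged = [self.free[0]]
        for start, size in self.free[1:]:
            last_start, last_size = merged[-1]
            if last_start + last_size == start:  # neighbours: join them
                merged[-1] = (last_start, last_size + size)
            else:
                merged.append((start, size))
        self.free = merged
```

For example, with 100 units of memory, allocating 30 for A and 50 for B leaves a single 20-unit hole, so a further 30-unit request fails until A's block is reclaimed.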

② Secondary Storage Management

Stores data on SSDs and HDDs (non-volatile and large in capacity, but much slower than RAM)

Loads only what is needed into main memory, and moves the rest back to storage

Always works in conjunction with memory management
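Loading only what is needed can be sketched as demand paging. This sketch assumes a fixed number of RAM frames and least-recently-used (LRU) eviction, which is one common textbook policy rather than what any particular OS does; the page contents are fake strings standing in for disk reads, and the class name is hypothetical.

```python
from collections import OrderedDict


class DemandPager:
    """Keep at most `frames` pages in RAM; fetch the rest from storage on demand."""

    def __init__(self, frames: int) -> None:
        self.frames = frames
        self.ram: "OrderedDict[int, str]" = OrderedDict()  # least recently used first
        self.faults = 0

    def access(self, page: int) -> str:
        if page in self.ram:
            self.ram.move_to_end(page)  # hit: mark as most recently used
            return self.ram[page]
        self.faults += 1  # miss: a "page fault"
        if len(self.ram) >= self.frames:
            # Evict the least recently used page. A real OS would first write
            # it back to storage if it had been modified.
            self.ram.popitem(last=False)
        self.ram[page] = f"data-of-page-{page}"  # pretend we read it from disk
        return self.ram[page]


if __name__ == "__main__":
    pager = DemandPager(frames=3)
    for p in [1, 2, 3, 1, 4, 2]:
        pager.access(p)
    print("page faults:", pager.faults, "pages in RAM:", list(pager.ram))
```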

③ Process Management

Manages running programs as individual process units

Divides CPU time into small slices and distributes them to each process

Prevents resource monopolization and conflicts
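Dividing CPU time into slices is classically done with round-robin scheduling. The sketch below assumes every process arrives at time 0 and needs only the CPU (no I/O); real schedulers also weigh priorities and interactivity, and the function name is hypothetical.

```python
from collections import deque
from typing import Dict, List, Tuple


def round_robin(bursts: Dict[str, int], quantum: int) -> List[Tuple[str, int, int]]:
    """Return the (process, start, end) CPU slices of a round-robin schedule."""
    ready = deque(bursts.items())  # (name, remaining CPU time) in arrival order
    timeline: List[Tuple[str, int, int]] = []
    clock = 0
    while ready:
        name, remaining = ready.popleft()
        run = min(quantum, remaining)  # nobody keeps the CPU longer than one slice
        timeline.append((name, clock, clock + run))
        clock += run
        if remaining > run:
            ready.append((name, remaining - run))  # unfinished: back of the queue
    return timeline


if __name__ == "__main__":
    for name, start, end in round_robin({"A": 5, "B": 2, "C": 3}, quantum=2):
        print(f"{start:>2}-{end:<2} {name}")
```

No process can monopolize the CPU: even the longest job (A) is interrupted after each 2-unit slice so that B and C get a turn.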

④ Input/Output Device Management

Abstracts the differences between diverse devices such as keyboards, mice, and printers

Standardizes I/O so that programs can interact with all devices in a uniform way

Prevents conflicts during simultaneous requests and handles them efficiently
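A uniform device interface can be sketched with an abstract base class. The class names below are hypothetical; Unix expresses the same idea by letting the same `read()`/`write()` calls work on terminals, disks, and pipes alike. A per-device lock stands in, as an assumption, for the OS serializing simultaneous requests.

```python
import threading
from abc import ABC, abstractmethod
from typing import List


class Device(ABC):
    """Uniform interface: programs call write() the same way for every device."""

    def __init__(self) -> None:
        self._lock = threading.Lock()  # serializes simultaneous requests

    def write(self, data: str) -> None:
        with self._lock:  # a second caller waits until the first finishes
            self._device_write(data)

    @abstractmethod
    def _device_write(self, data: str) -> None:
        """Device-specific details (the 'driver' part) live in subclasses."""


class Printer(Device):
    def __init__(self) -> None:
        super().__init__()
        self.pages: List[str] = []

    def _device_write(self, data: str) -> None:
        self.pages.append(data)  # pretend to print one page


class Display(Device):
    def __init__(self) -> None:
        super().__init__()
        self.screen = ""

    def _device_write(self, data: str) -> None:
        self.screen = data  # pretend to redraw the screen


def send(device: Device, data: str) -> None:
    # The caller neither knows nor cares which concrete device it is using.
    device.write(data)
```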

⑤ File (Data) Management

Systematically stores and manages documents, photos, executables, and other files

Prevents file corruption even when multiple users or programs access the same file
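One concrete mechanism for safe concurrent access is file locking. This sketch assumes a Unix-like system, where Python's `fcntl.flock` exposes the OS's advisory locks; "advisory" means it only protects against other writers that also take the lock. The function and file names are hypothetical.

```python
import fcntl
import os
import tempfile


def append_record(path: str, line: str) -> None:
    """Append one line while holding an exclusive lock on the file."""
    with open(path, "a", encoding="utf-8") as f:
        fcntl.flock(f.fileno(), fcntl.LOCK_EX)  # other lock-holders wait here
        try:
            f.write(line + "\n")
            f.flush()
            os.fsync(f.fileno())  # ask the OS to push the data to storage
        finally:
            fcntl.flock(f.fileno(), fcntl.LOCK_UN)


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "shared.log")
    for i in range(3):
        append_record(path, f"entry {i}")
    with open(path, encoding="utf-8") as f:
        print(f.read(), end="")
```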

⑥ System Protection (User Access Control)

Defines and manages permissions for who can do what

Protects the system from malicious access and faulty programs
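Unix file permission bits are one concrete form of "who can do what". This minimal sketch assumes a POSIX system; the helper name `restrict_to_owner` is hypothetical, while `os.chmod`, `os.stat`, and `stat.filemode` are standard-library calls.

```python
import os
import stat
import tempfile


def restrict_to_owner(path: str) -> str:
    """Let only the file's owner read and write it; return the new mode string."""
    # 0o600: read + write for the owner, no access for group or others.
    os.chmod(path, stat.S_IRUSR | stat.S_IWUSR)
    return stat.filemode(os.stat(path).st_mode)


if __name__ == "__main__":
    fd, path = tempfile.mkstemp()
    os.close(fd)
    print(restrict_to_owner(path))  # -rw-------
    os.remove(path)
```

The OS then enforces this on every `open()`: other non-administrator users are refused access to the file.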

⑦ Networking and Command Interpreter

Manages external communication such as internet access and file transfers

Translates command-line inputs and button clicks into actual system operations
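The command-interpreter role can be sketched in a few lines: split the typed text into a program name and arguments, then ask the OS to start that program as a new process. This assumes a Unix-like system with an `echo` program; real shells such as bash also handle pipes, redirection, and built-in commands, which this sketch does not. The function name is hypothetical.

```python
import shlex
import subprocess


def interpret(command_line: str) -> subprocess.CompletedProcess:
    """Turn a typed command into a request for the OS to run a program."""
    argv = shlex.split(command_line)  # "echo 'hi there'" -> ["echo", "hi there"]
    # subprocess.run asks the OS to create a new process running argv[0].
    return subprocess.run(argv, capture_output=True, text=True, check=False)


if __name__ == "__main__":
    result = interpret("echo 'hello from the shell'")
    print(result.returncode, result.stdout, end="")
```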

One-line Summary: An operating system is the core software that makes computers convenient for users, efficient for the system, and stable for the overall environment.

2️⃣ Development and characteristics of operating systems

💡 Development Process of Operating Systems

Why Did Operating Systems Become Necessary?

In the early days of computing, humans managed everything directly. This caused two core problems: setting up tasks and handling input/output took far too long, and because the CPU was fast while I/O was slow, the CPU spent much of its time sitting idle. The effort to eliminate this inefficiency produced an automated management system: the operating system.

The Evolution of Operating Systems

| Era | Key Development |
|---|---|
| 1940s | No OS; humans managed everything directly |
| 1950s | Batch processing introduced; the beginning of automation |
| 1960s | Multiprogramming & time-sharing; improved CPU efficiency, multi-user support |
| 1970s~ | Distributed processing; multiple computers connected via network → leading to today's cloud computing |

💡Three Major Types of Operating Systems

- Batch Processing OS: Collects jobs and processes them in order. Automation achieved, but CPU efficiency remained low

- Time-Sharing OS: Divides CPU time into tiny slices and alternates between tasks → fast response times, multi-user support

- Distributed OS: Connects multiple computers via a network to operate as a single system → improved performance and reliability, the foundation of cloud computing
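The "fast response times" of time-sharing can be checked with a little arithmetic. Here "first response" means how long a job waits before it first gets the CPU; the sketch assumes all jobs are submitted at time 0 in the listed order and need only the CPU, and the job lengths are made-up numbers.

```python
from typing import Dict


def first_response_batch(bursts: Dict[str, int]) -> Dict[str, int]:
    """Batch: a job starts only after every earlier job has completely finished."""
    times: Dict[str, int] = {}
    clock = 0
    for name, burst in bursts.items():
        times[name] = clock
        clock += burst  # the whole job runs before the next one starts
    return times


def first_response_time_sharing(bursts: Dict[str, int], quantum: int) -> Dict[str, int]:
    """Time-sharing: a job waits for at most one short slice from each job ahead."""
    times: Dict[str, int] = {}
    clock = 0
    for name, burst in bursts.items():
        times[name] = clock
        clock += min(quantum, burst)  # earlier jobs run only one slice first
    return times


if __name__ == "__main__":
    jobs = {"A": 50, "B": 30, "C": 20}
    print("batch:       ", first_response_batch(jobs))
    print("time-sharing:", first_response_time_sharing(jobs, quantum=5))
```

With these numbers, job C first gets the CPU at time 80 under batch processing but at time 10 under time-sharing.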

💡What Is OS Structure?

OS structure refers to how the functions of an operating system are internally organized and designed. It is not about describing what the OS does on the surface, but rather how it is architected on the inside. The central concept is the kernel, which is responsible for managing core resources such as the CPU and memory, with various services arranged around it. In short, the difference in OS structure comes down to how functions are arranged and separated within the system.

🤔Why Is OS Structure Necessary?

There are two main reasons why a defined operating system structure is needed.

Functional Complexity: Early operating systems had relatively little to do, but today's OS is responsible for processor management, file protection, network handling, and much more. Implementing all of these functions without any structured design would make the system nearly impossible to maintain.

Structure Determines Outcomes: Even with the same set of functions, the choice of structure directly affects the system's performance, stability, extensibility, and maintainability. A poorly designed structure means that modifying a single function could impact the entire system, whereas a well-designed structure allows only the necessary parts to be updated without broader side effects.

Four Types of OS Structure

There are four main types of OS structure, each designed differently depending on its purpose and use environment.

| Structure | Core Idea | Advantages | Disadvantages |
|---|---|---|---|
| Monolithic | All functions packed into a single kernel | Fast processing speed | Errors affect the entire system; hard to maintain |
| Modular | Functions separated into plug-in components | Flexible and extensible | Requires careful management of module dependencies |
| Layered | Each layer may access only the layer directly below it | Easy to debug; high stability | Performance overhead; lacks flexibility |
| Microkernel | Only core functions in the kernel; the rest moved to user space | High safety and security | Frequent message passing causes performance overhead |

1) Monolithic Structure

The earliest and simplest form of OS structure. All operating system functions run within a single kernel space, meaning nothing is separated — everything is packed together in one place. Functions within the kernel call each other directly with no intermediate steps, which makes processing very fast with minimal overhead. However, the dependency between functions is extremely high, meaning a problem in one area can spread to the entire system, making it difficult to maintain and debug. In short: fast, but risky. Early UNIX and MS-DOS were built on this structure.

Looking at the structure from the top down, users sit at the very top, followed by user programs such as shells, compilers, and system libraries. When these user programs make a request, they enter the kernel through a system call. Inside the kernel, all functions (including the file system, processor scheduling, memory management, and I/O management) are packed together within a single kernel space. In this structure, the kernel knows everything and manages everything directly.

Because everything shares one kernel space, the larger the kernel grows, the harder it becomes to manage. Ultimately, the monolithic structure gains fast performance by placing all functions in a single kernel, at the cost of safety and maintainability.
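A toy model shows both sides of the trade-off. In this sketch (class and method names are hypothetical), every subsystem is a method of one object, so a system call reaches the file system and memory manager through plain function calls, but a bug in one subsystem is not contained.

```python
from typing import Dict


class MonolithicKernel:
    """Every subsystem lives in the same object, i.e. the same 'kernel space'."""

    def __init__(self) -> None:
        self.memory_used = 0
        self.files: Dict[str, bytes] = {}

    # System call entry point.
    def syscall_write_file(self, name: str, data: bytes) -> int:
        self._allocate(len(data))  # direct function call, no message passing
        self._fs_store(name, data)  # direct function call
        return len(data)

    # Internal subsystems, all sharing the same state.
    def _allocate(self, size: int) -> None:
        self.memory_used += size

    def _fs_store(self, name: str, data: bytes) -> None:
        if not name:
            raise ValueError("file-system bug")  # nothing stops this spreading
        self.files[name] = data
```

Calling `syscall_write_file("", b"xx")` lets the file-system error escape straight to the caller after memory was already counted as used, leaving the kernel's state inconsistent. In a real monolithic kernel, a bug like this can bring down the whole system.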

2) Modular Structure

Born from the question: "What if we kept the monolithic base but managed functions as separate, interchangeable parts?" The modular structure keeps the monolithic foundation but separates functions into independent modules that can be loaded or removed at runtime as needed, much like plugging components in or unplugging them. Its greatest strength is balance: it preserves the performance of a monolithic structure while significantly improving flexibility and maintainability. The downside is that dependencies between modules must be carefully managed, and because modules run inside the kernel, loading a faulty module can still affect kernel stability. Modern UNIX and UNIX-like systems such as Solaris and Linux use this structure.
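The load/unload idea can be sketched with a table of handlers. This is only an analogy: a Python dictionary stands in for the kernel's module table, and the names `ModularKernel` and `usb_storage` are hypothetical. Linux does this for real with loadable kernel modules (`.ko` files) managed by `insmod`, `rmmod`, and `modprobe`.

```python
from typing import Callable, Dict


class ModularKernel:
    """A fixed core plus a table of modules that can be added or removed at runtime."""

    def __init__(self) -> None:
        self.modules: Dict[str, Callable[[str], str]] = {}

    def load_module(self, name: str, handler: Callable[[str], str]) -> None:
        if name in self.modules:
            raise ValueError(f"module {name!r} already loaded")
        self.modules[name] = handler  # now callable directly, like built-in code

    def unload_module(self, name: str) -> None:
        del self.modules[name]  # removed without rebuilding the core

    def request(self, name: str, arg: str) -> str:
        if name not in self.modules:
            return f"error: no module {name!r}"
        return self.modules[name](arg)  # direct call: monolithic-style speed


if __name__ == "__main__":
    kernel = ModularKernel()
    kernel.load_module("usb_storage", lambda dev: f"mounted {dev}")
    print(kernel.request("usb_storage", "sdb"))
    kernel.unload_module("usb_storage")
    print(kernel.request("usb_storage", "sdb"))
```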

3) Layered Structure

A structure with a strict top-to-bottom hierarchy. The OS is divided into multiple layers, and each layer can interact only with the layer directly below it; skipping layers is not allowed. This makes it easy to pinpoint which layer a problem occurred in, improving overall stability and debuggability. However, because every request must pass through each layer one by one, the number of calls increases and performance suffers. The rigid rules between layers also make it inflexible when adding new features or modifying existing ones. As a result, while the layered structure is easy to understand and well organized in theory, it is rarely used as-is in commercial operating systems because of its performance limitations. The classic example is Dijkstra's THE operating system, and the approach is found mostly in academic and educational systems.

Viewed from top to bottom, the layers form a stack running from user programs at the top down to the hardware at the bottom. The key rule is that each layer can interact only with the layer directly below it; skipping an intermediate layer is not allowed. While this structure is very easy to understand, applying it directly to a real operating system imposes a significant cost in both performance and flexibility. In short, the layered structure is well suited to understanding and design but has clear limitations in real-world performance.
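The "no skipping" rule and its cost can be sketched directly. In this toy model each layer holds a reference only to the layer below it, so one request from the top touches every layer on the way down. The layer names used in the example are hypothetical and are not the actual layers of THE.

```python
from typing import List, Optional


class Layer:
    """Each layer knows only the layer directly below it."""

    def __init__(self, name: str, below: Optional["Layer"] = None) -> None:
        self.name = name
        self.below = below
        self.calls = 0

    def handle(self, request: str) -> str:
        self.calls += 1
        if self.below is None:  # bottom layer: the hardware
            return f"{self.name} did {request}"
        return self.below.handle(request)  # no skipping: pass one level down


def build_stack(names_bottom_up: List[str]) -> Optional[Layer]:
    """Build the layers from the bottom up and return the top layer."""
    top: Optional[Layer] = None
    for name in names_bottom_up:
        top = Layer(name, top)
    return top
```

With a stack of four layers (for example hardware, memory management, I/O management, and user programs), a single request from the top costs four calls; every extra layer adds one more.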

4) Microkernel Structure

Starts from the idea of "leave only the essentials in the kernel." Only the most fundamental functions (process management, memory management, and inter-process communication) remain in the kernel. Everything else, such as file systems and device management, is moved out to user space. This results in a smaller kernel with significantly improved safety and security. The tradeoff is that the kernel and user-space services must frequently exchange messages, which introduces performance overhead. Real-world examples include Mach, MINIX, and QNX, with QNX being widely used in safety-critical fields such as automotive and medical devices. macOS and Windows, on the other hand, use a hybrid structure that incorporates only some microkernel concepts rather than adopting the approach fully.

In this layout, only the core functions remain in the kernel, while everything else, such as the file system and device management, runs in user space. User-space services communicate with the kernel and with each other only through the kernel's message-passing (IPC) mechanism, which they invoke via system calls. As a result, the structure is cleanly separated, and when a service fails, its impact does not spread widely across the system. In short, this structure minimizes the kernel in order to maximize stability and extensibility.
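Message passing and fault isolation can be sketched in a few lines. This is a simplification: a function call stands in for IPC between separate address spaces, and catching an exception stands in for the kernel surviving a crashed service. The names `Microkernel` and `file_service` are hypothetical.

```python
from typing import Callable, Dict


class Microkernel:
    """The kernel only delivers messages; services run outside it ('user space')."""

    def __init__(self) -> None:
        self.services: Dict[str, Callable[[dict], dict]] = {}
        self.messages_delivered = 0

    def register(self, name: str, service: Callable[[dict], dict]) -> None:
        self.services[name] = service

    def send(self, to: str, message: dict) -> dict:
        self.messages_delivered += 1  # every request is one IPC hop (overhead)
        try:
            return self.services[to](message)
        except Exception as exc:  # a failing service does not take down the kernel
            return {"error": f"{to} failed: {exc}"}


def file_service(msg: dict) -> dict:
    """A user-space file-system service that only understands 'read'."""
    if msg["op"] == "read":
        return {"data": f"contents of {msg['path']}"}
    raise RuntimeError("unsupported operation")
```

A request the file service cannot handle comes back as an error message, and the kernel keeps delivering messages afterwards; compare this with the monolithic case, where the same bug would propagate through the whole kernel.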

🤔Why Do Modern Operating Systems Use a Hybrid Structure?

There are two main reasons.

First, every structure has clear and distinct trade-offs. The monolithic structure offers great performance but is difficult to manage. The microkernel structure is highly stable but falls short in performance. The layered structure is easy to understand but lacks flexibility. No single structure can realistically satisfy all requirements at the same time.

Second, the demands placed on operating systems have grown enormously. Modern operating systems are no longer expected to simply run; they must deliver high performance, strong stability and security, support for a wide variety of hardware, and continuous feature expansion all at once. It is practically impossible for any single structure to meet all of these demands. As a result, operating systems have chosen to mix structures depending on the situation.

In conclusion, modern operating systems do not commit to a single structure. Instead, they adopt the strengths of multiple structures while minimizing their respective weaknesses through a hybrid approach.

Linux: Monolithic base + Modular structure combined

Windows & macOS: Monolithic + Microkernel concepts blended together

Key Takeaway: Modern operating systems are not the result of finding a perfect structure — they are the product of practical compromise.

3️⃣ Setting up a practice environment

💡Virtual Machines

1. What Is a Virtual Machine?

A virtual machine (VM) is a technology that divides the resources of a single physical computer into multiple independent execution environments. Using virtualization software, you can create several virtual computers on one laptop, each running a different operating system just like a real machine. Because each virtual machine is isolated from the others and from the host, a problem inside a VM (such as a broken configuration or a system that no longer boots) generally does not affect the host laptop itself.

2. Why Use Virtual Machines?

Directly installing an operating system on a personal PC can lead to the following problems.

Incorrect settings can prevent the system from booting or cause system damage

Once a problem occurs, restoring the original state is difficult

Switching between multiple operating systems for practice is a significant burden on a personal PC

Virtual machines solve all of these issues. Multiple operating systems can be practiced safely on a single PC, and recovery is easy even if something goes wrong.

3. Host-Based Virtualization

This course uses a host-based virtualization approach, where virtualization software is installed on top of the existing operating system and virtual machines are run within it. This method allows direct installation on a personal PC and easy recovery, making it the most suitable approach for hands-on practice.

The practice workflow is as follows.

Install Virtualization Software → Create Virtual Machine → Install Ubuntu OS

4. Virtualization Software: VirtualBox

This course uses VirtualBox for hands-on practice.

Advantages: Free to use, relatively simple installation and setup, beginner-friendly

Note: VirtualBox does not work properly — or at all — on MacBooks with Apple Silicon chips (M1·M2·M3). Students with these devices must use alternative virtualization software.

5. Linux Distribution for Practice: Ubuntu

The operating system to be installed inside the virtual machine is Ubuntu Linux. It was chosen for three reasons.

Provides a user-friendly interface accessible even to beginners

Well-supported by learning resources and an active community

Relatively straightforward to install and use in a virtual machine environment

At the end of this chapter, I was able to install Ubuntu in the VM using VirtualBox! Yay.